January 2025

Parallel planning in SAC – Who will win, the first or the last one to publish data?

Background – Private and Public Versions

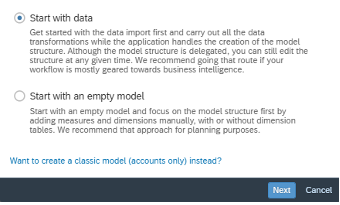

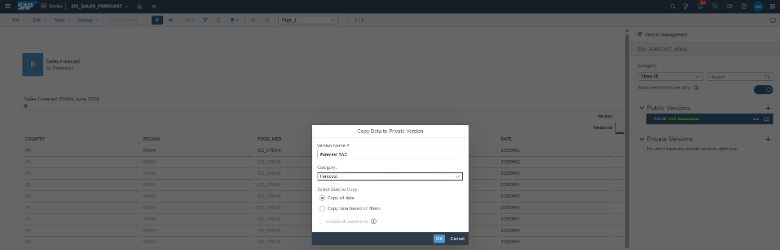

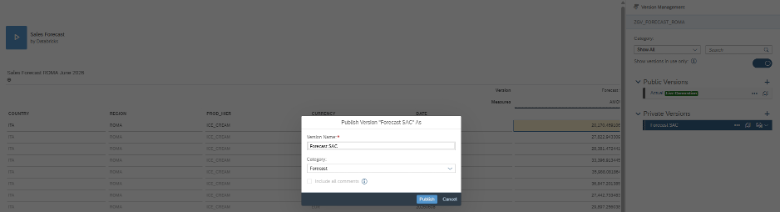

When you open a SAC model in input mode, all data is copied into a private version. Changes are stored in that private version until you publish the data.

All this is well described in the following learning journey:

A lot of questions concerning this topic are already answered in the following blogs:

FAQ: Version Management with SAP Analytics Cloud (Part I – Basics)

FAQ: Version Management with SAP Analytics Cloud (Part II – Versions in Action)

How does SAP store changes and how can I trace them?

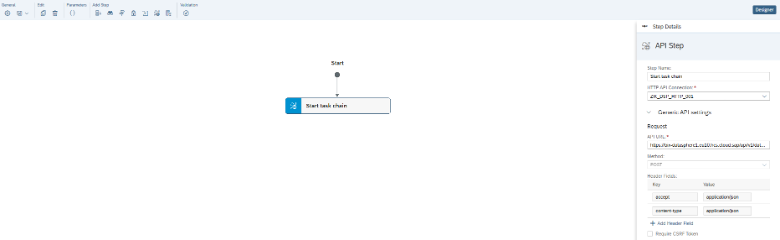

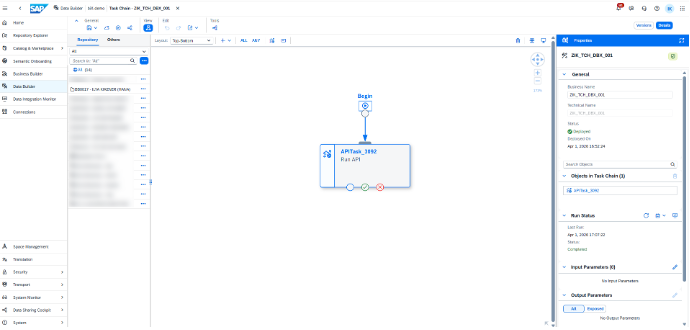

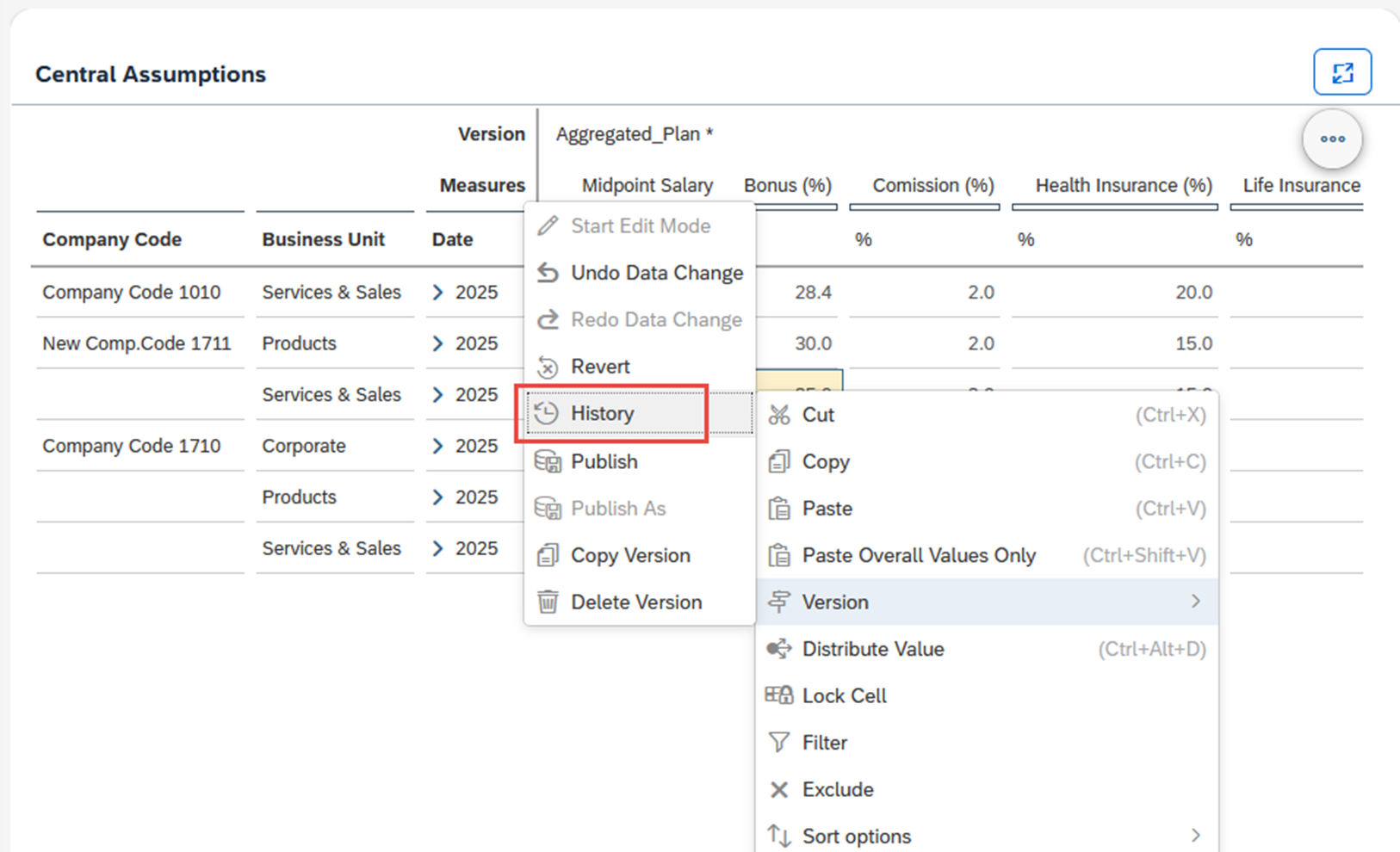

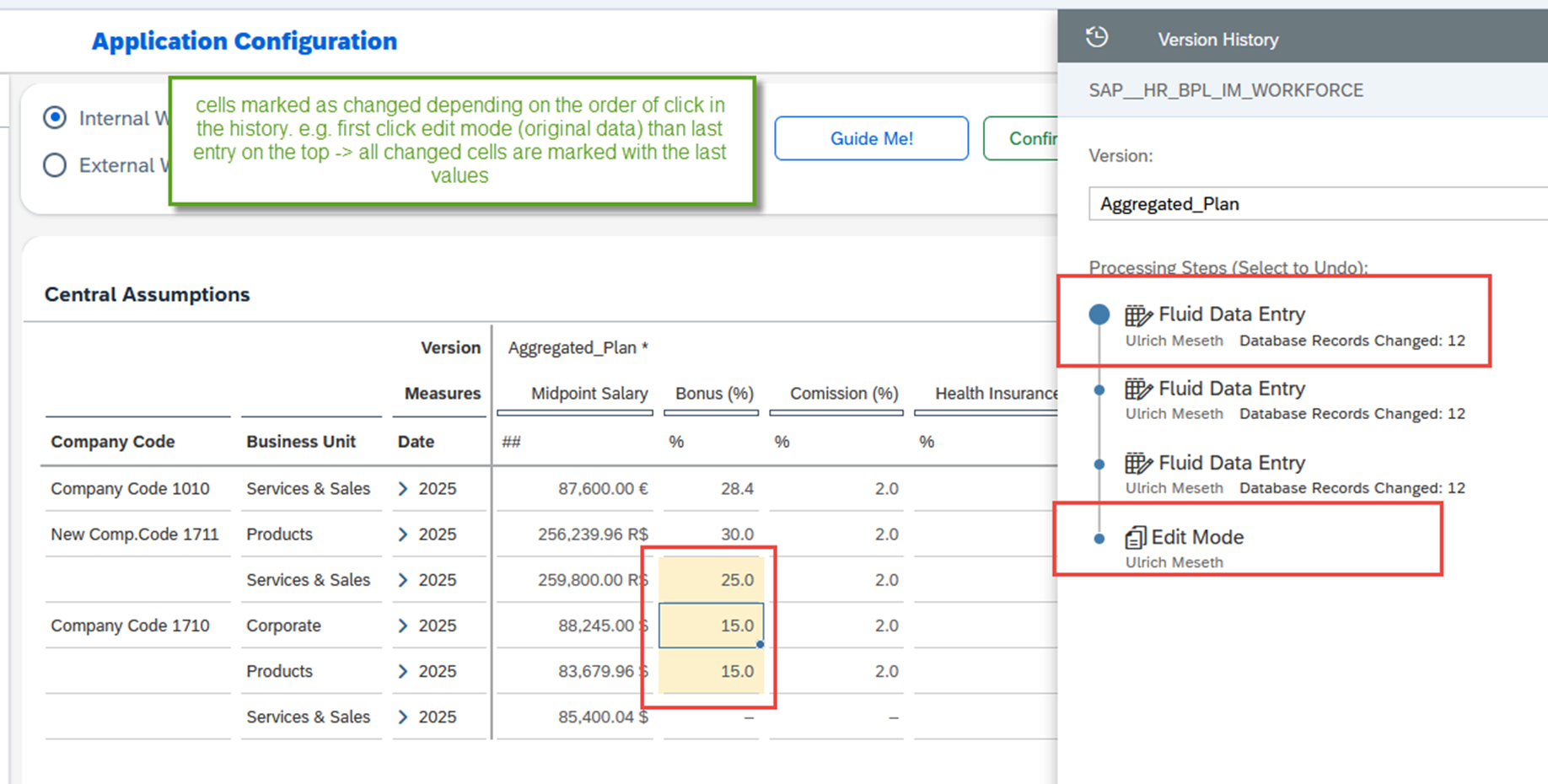

You will now see a “Fluid Data Entry” step for each entry cycle. Depending on which step you click, the system highlights the cells you changed in that step. Here you can also revert a single entry step.

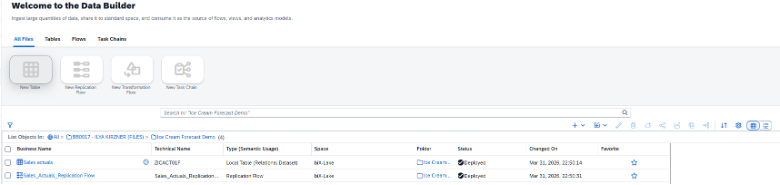

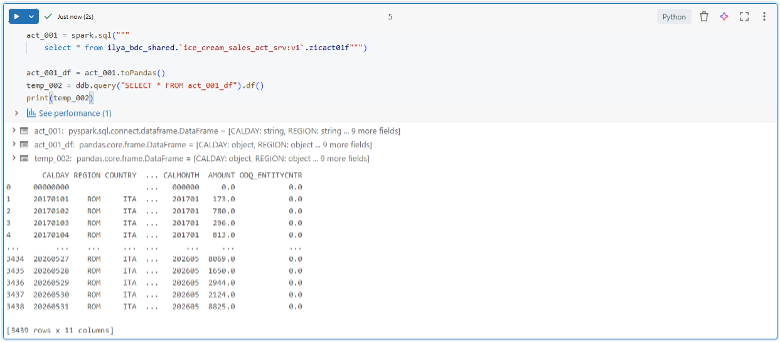

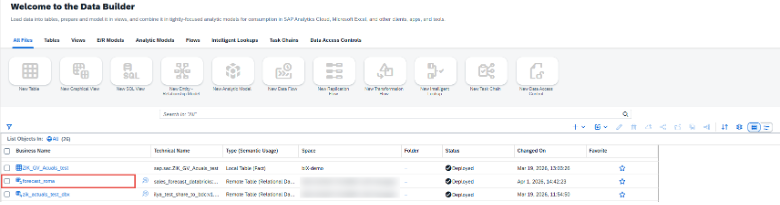

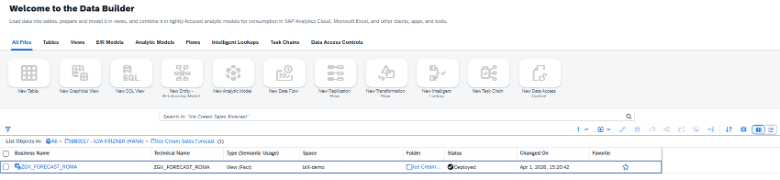

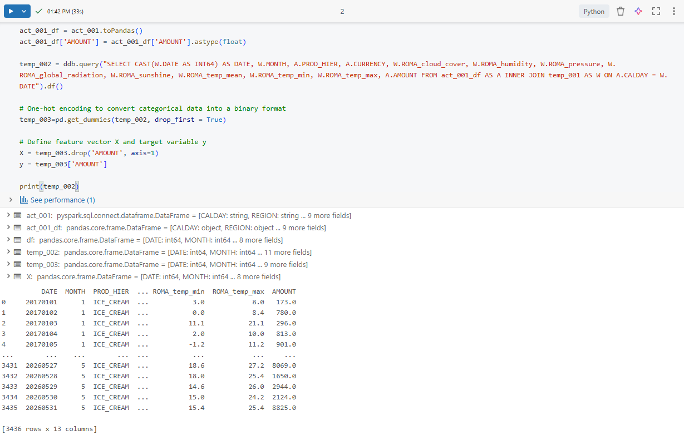

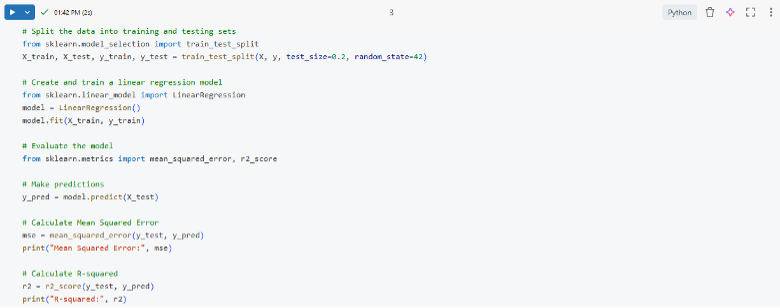

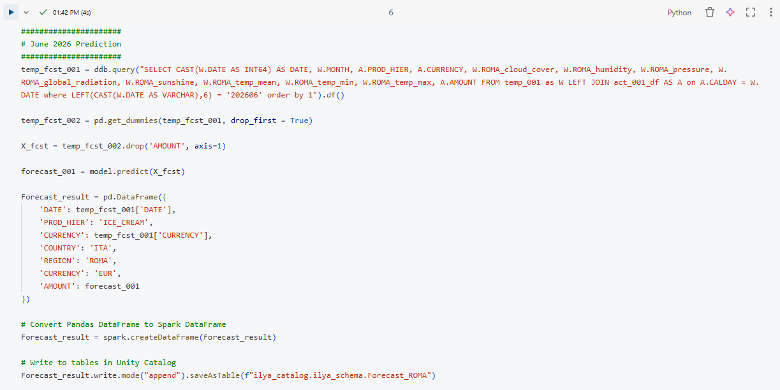

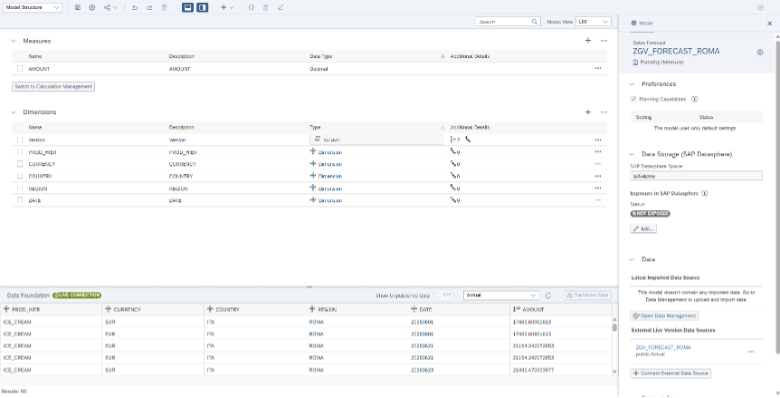

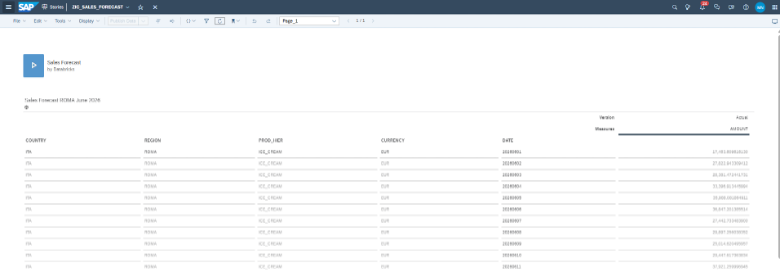

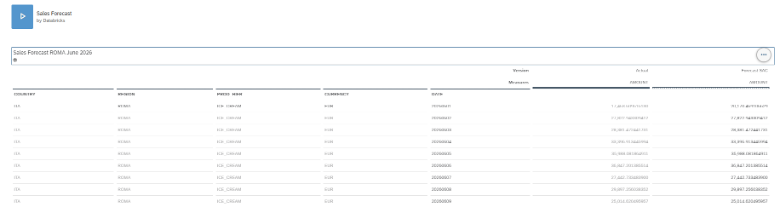

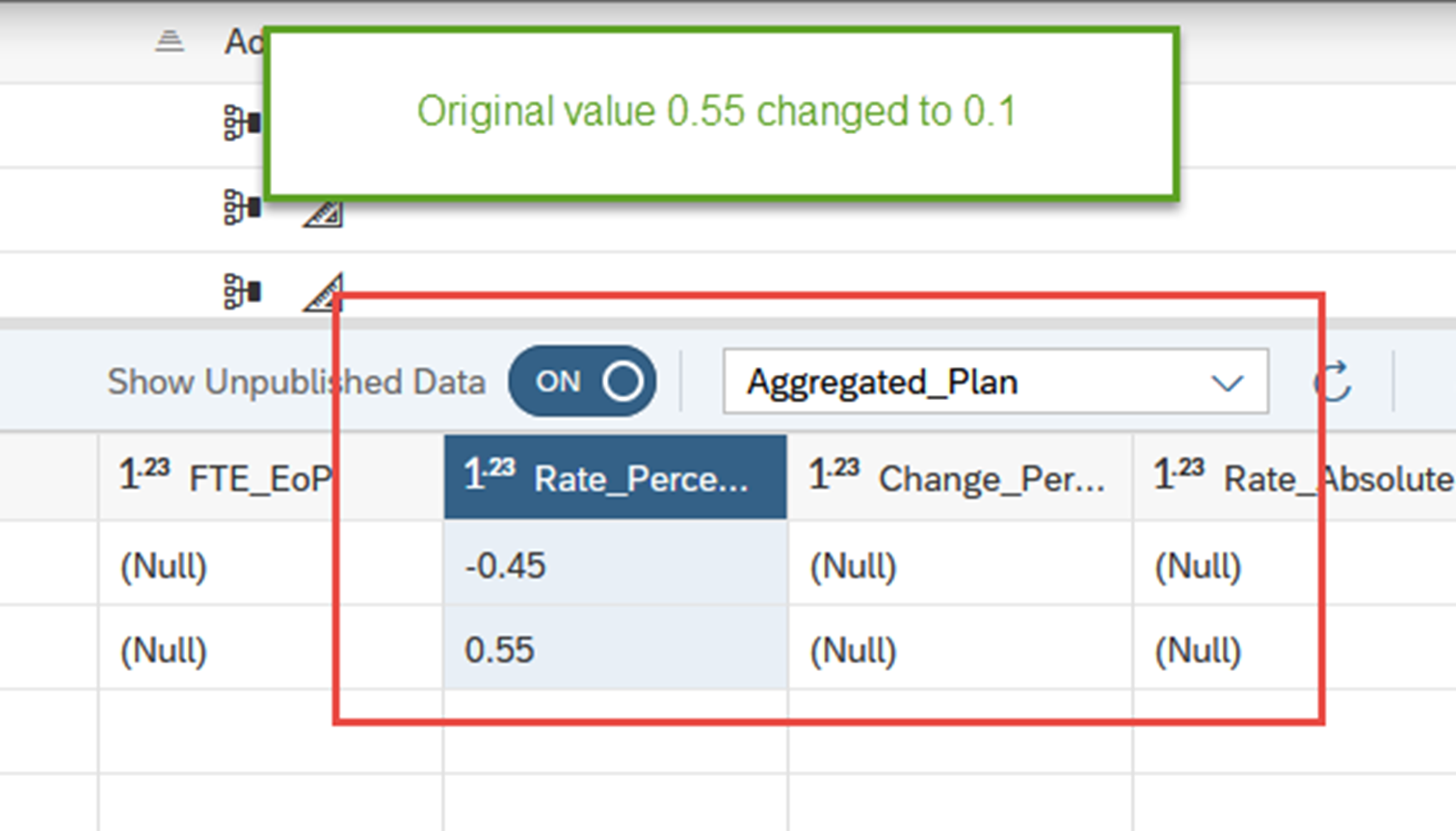

Now let us have a look at how SAP stores the data. You can see the data in the Data Foundation part of the data modeler, where a toggle button lets you switch on unsaved data. By default the toggle is off and only data in the public version is shown. If you switch it on, you will see one line for each fluid data entry step, containing the delta value that step created. The total of all lines for a certain selection is therefore the value you see in the report.
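The delta logic can be illustrated with a small sketch. It is not SAC code: the dictionaries, the cell keys and the function `reported_values` are made up for illustration, assuming only what is described above, namely that each fluid data entry step is stored as a delta on top of the public value.

```python
from collections import defaultdict

# Hypothetical data: public values per cell (period, account).
public = {("2025-01", "Revenue"): 100.0}

# Hypothetical data: one entry per fluid data entry step, holding only deltas.
steps = [
    {"step": 1, "deltas": {("2025-01", "Revenue"): 20.0}},
    {"step": 2, "deltas": {("2025-01", "Revenue"): -5.0,
                           ("2025-02", "Revenue"): 50.0}},
]

def reported_values(public, steps, skip_step=None):
    """Sum the public value and all step deltas per cell.

    skip_step mimics reverting a single entry step in the version history.
    """
    totals = defaultdict(float, public)
    for entry in steps:
        if entry["step"] == skip_step:
            continue
        for cell, delta in entry["deltas"].items():
            totals[cell] += delta
    return dict(totals)

print(reported_values(public, steps))
print(reported_values(public, steps, skip_step=2))
```

With these numbers the report shows 115 for January (100 + 20 - 5). Leaving out step 2 gives 120 for January and no February value at all, which is the effect of reverting that step.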

You will see unpublished data and version history only for your own user. There is no way to see the unpublished values of any other user.

Data checks during save

What does SAC do when data is saved?

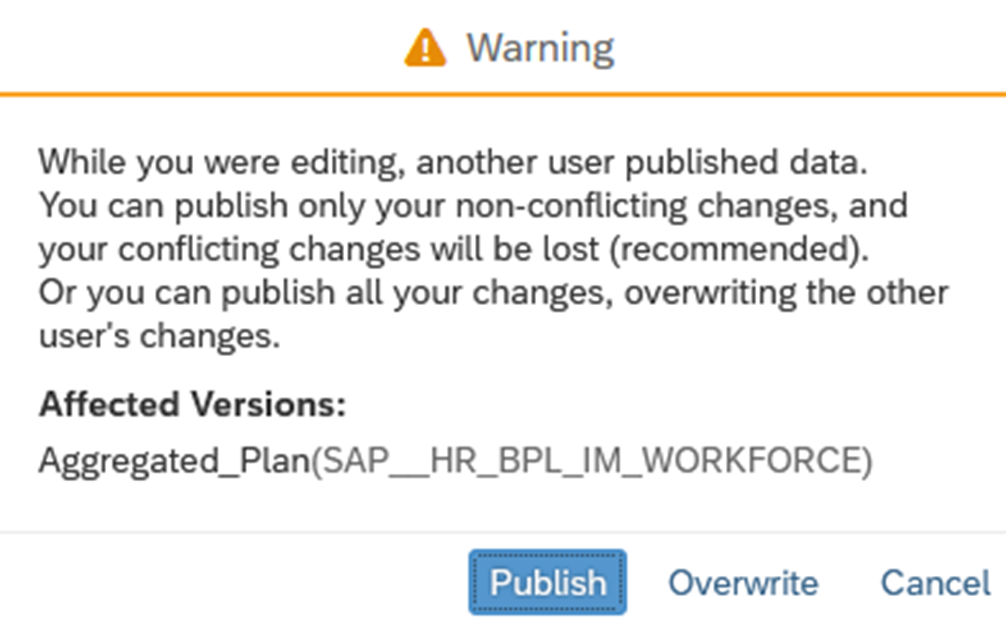

SAP will compare the original value in your version history with the current public version. If the values are unchanged, your data is published. If the values are not identical, you have to decide whether to publish only your non-conflicting changes or to overwrite the other user's changes.

There is no way to know which changes are the conflicting ones, or at least we have not found a simple one. Our workaround was to compare all changed values with the current public version on a second screen.
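The check and the two publishing options can be modelled in a few lines. This is only a conceptual sketch of the behaviour described above, not SAC's implementation or API. The names `find_conflicts` and `publish` and the sample values are assumptions for illustration.

```python
def find_conflicts(base, current_public, changes):
    """Return the changed cells whose public value differs from the value
    the private version started from (base)."""
    return sorted(
        cell for cell in changes
        if base.get(cell) != current_public.get(cell)
    )

def publish(base, current_public, changes, overwrite=False):
    """Return the new public data.

    overwrite=False: publish only the non-conflicting changes.
    overwrite=True:  publish everything, replacing the other user's values.
    """
    conflicts = set(find_conflicts(base, current_public, changes))
    result = dict(current_public)
    for cell, value in changes.items():
        if overwrite or cell not in conflicts:
            result[cell] = value
    return result

# Hypothetical values: Jan was changed by a colleague after we started.
base = {"Jan": 100, "Feb": 200}            # public data when we started
current_public = {"Jan": 130, "Feb": 200}  # public data when we publish
changes = {"Jan": 110, "Feb": 250}         # our own entries

print(find_conflicts(base, current_public, changes))
print(publish(base, current_public, changes, overwrite=False))
print(publish(base, current_public, changes, overwrite=True))
```

The list returned by `find_conflicts` is the information we were missing in the dialog. In the sketch it is the manual comparison on the second screen, done programmatically.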

If you had started your planning after the other user published the data, no warning would occur and the other user's changes would be overwritten. Of course, this is not parallel planning, but whether you open the model just before or just after your colleague presses the save button may be a difference of only a second. Especially if you work with a splash-down, you may not notice a small change on a lower level.
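The timing effect can be shown with a toy model. Again, this is a sketch under assumptions and not SAC itself: the class `Model`, its methods and the function `scenario` are hypothetical. The only assumption taken from the text is that a private version remembers the public values it started from.

```python
class Model:
    """Toy model with one public version (illustration only)."""

    def __init__(self, public):
        self.public = dict(public)

    def open_private(self):
        # The private version remembers the public values it started from.
        return {"base": dict(self.public), "changes": {}}

    def publish(self, private):
        conflicts = [
            cell for cell in private["changes"]
            if private["base"].get(cell) != self.public.get(cell)
        ]
        if conflicts:
            return conflicts  # the user would now have to decide
        self.public.update(private["changes"])
        return []

def scenario(b_opens_before_a_publishes):
    model = Model({"Q1": 100})
    a = model.open_private()
    b = model.open_private() if b_opens_before_a_publishes else None
    a["changes"]["Q1"] = 120
    model.publish(a)
    if b is None:
        b = model.open_private()  # B starts after A has published
    b["changes"]["Q1"] = 90
    conflicts = model.publish(b)
    return conflicts, model.public["Q1"]

print(scenario(True))   # (['Q1'], 120): B is warned, A's value is still public
print(scenario(False))  # ([], 90): no warning, A's value is overwritten
```

In both runs the users enter the same numbers. Only the moment at which user B opens the private version decides whether a conflict is reported.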