Introduction

This blog post introduces some details about SAP roles and authorizations, with a focus on the BW product. Roles are assigned to users to control their access to T-codes, menus, reports, program functions, and the like.

General Concept of SAP Roles and Authorizations

First of all, there are some key transaction codes (T-codes) that give access to the built-in tools provided by SAP. Before moving on to role creation and maintenance, I will briefly cover why we need roles and authorizations in a data warehouse.

In SAP BW systems, users often access data through queries and reports developed by skilled consultants. However, roles do more than limit access to data. They are also important for the consultants, developers, coders, and Basis administrators who need access to the very same system.

Let’s start with the user side of things. A company may have several departments, such as finance and planning, sales, human resources, CRM, logistics, and production. Why would a colleague from sales be allowed to access information from financial reporting or HR? To ensure data integrity and security, we must deploy roles and authorizations. To comply with consumer data protection laws as well, restricting access to sensitive data across the whole system is an essential component of any data service.

However, roles and authorizations are not only used to restrict access to sensitive data. We also use them to set structural access limits for technical development and maintenance by SAP consultants, whether they are internal or external. This gives us a common ground for technical development and smooth runtime operation in SAP BW. In short, the system controls who may read, write, modify, create, or delete HANA database-level objects and tables. Access to other objects, such as reports, views, InfoAreas, and DataStore Objects, can likewise be limited to user groups.

It is vital to remember that global naming conventions and authorization rules must be well defined from the start. Otherwise, it will be very hard to keep track of every ticket in which end users or technical and functional consultants request specific roles and authorizations. There are also best practices to follow and things to avoid.
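A naming convention is easier to keep when it can be checked mechanically. The sketch below is only an illustration: the pattern (a `Z` or `Y` prefix, a two-letter area code, then a descriptive suffix) and the function name `follows_convention` are assumptions invented for this example, not an SAP rule. Substitute whatever convention your project has agreed on.

```python
import re

# Hypothetical convention, invented for this example:
#   Z or Y prefix, underscore, two-letter area code, underscore, suffix.
ROLE_NAME_PATTERN = re.compile(r"^[ZY]_[A-Z]{2}_[A-Z0-9_]+$")


def follows_convention(role_name: str) -> bool:
    """Return True if role_name matches the assumed naming pattern."""
    return ROLE_NAME_PATTERN.fullmatch(role_name) is not None


print(follows_convention("Z_FI_REPORTING_DISPLAY"))  # True
print(follows_convention("sales_role_1"))            # False
```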

SAP BW Focused Authorizations

Let’s start with the basics.

SAP BW uses two different authorization concepts:

1. Standard Authorizations: These are based on the SAP standard authorization concept. They are required by all users who model or load data, who need access to the planning workbench, or who define queries.

2. Analysis Authorizations: These are not based on the SAP standard authorization concept. They are required for users who want to display transaction data for authorization-relevant characteristics in queries.

SAP BW Focused Roles

Roles can be thought of as three separate types:

1. Single Roles

2. Derived Roles

3. Composite Roles

Authorization objects can be assigned to users or roles. It is easier to control and maintain the authorization process if the objects are assigned to roles, and roles are assigned to users.
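To make the difference between direct and role-based assignment concrete, here is a minimal Python sketch. It is a toy model, not an SAP API: the classes `SingleRole`, `CompositeRole`, and `User`, the function `effective_authorizations`, and all role and user names are invented for illustration. Derived roles are left out to keep it short.

```python
from dataclasses import dataclass, field


@dataclass
class SingleRole:
    name: str
    authorizations: set = field(default_factory=set)


@dataclass
class CompositeRole:
    # A composite role only groups single roles; it holds no authorizations itself.
    name: str
    members: list = field(default_factory=list)


@dataclass
class User:
    name: str
    roles: list = field(default_factory=list)   # SingleRole or CompositeRole
    direct: set = field(default_factory=set)    # directly assigned authorizations


def effective_authorizations(user: User) -> set:
    """Collect everything a user receives directly and through roles."""
    result = set(user.direct)
    for role in user.roles:
        singles = role.members if isinstance(role, CompositeRole) else [role]
        for single in singles:
            result |= single.authorizations
    return result


reporting = SingleRole("Z_BW_REPORTING", {"Z_AUT1"})
bundle = CompositeRole("Z_BW_SALES_BUNDLE", [reporting])
alice = User("ALICE", roles=[bundle])
bob = User("BOB", roles=[reporting])

# Changing the role once changes what both users receive.
reporting.authorizations.add("Z_AUT2")
print(sorted(effective_authorizations(alice)))  # ['Z_AUT1', 'Z_AUT2']
print(sorted(effective_authorizations(bob)))    # ['Z_AUT1', 'Z_AUT2']
```

With direct assignment, the same change would have to be repeated for every user, which is why the role-based route is easier to maintain.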

How to create a new Authorization Object

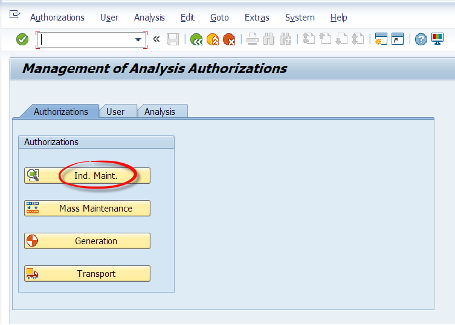

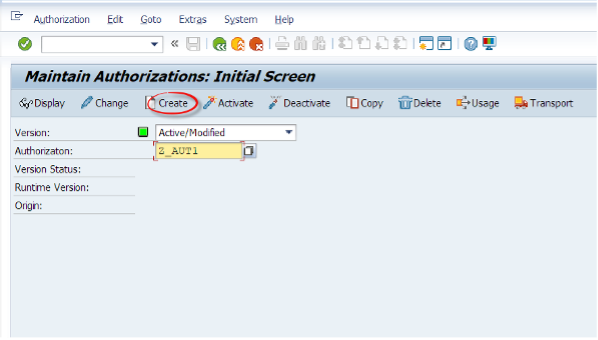

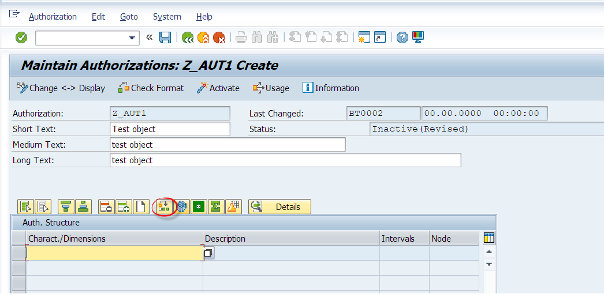

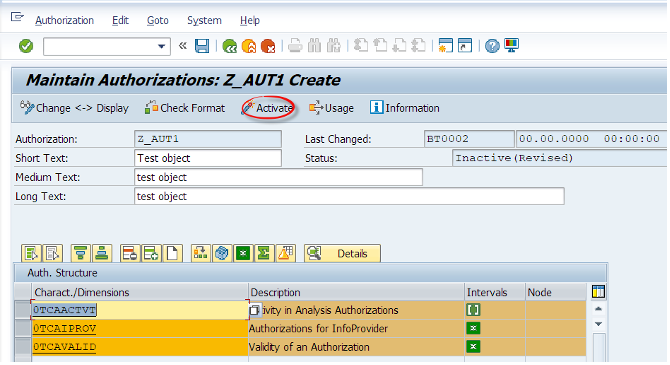

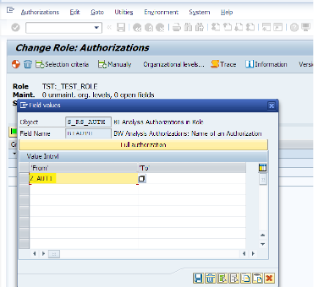

We enter the RSECADMIN T-code and open the Authorizations tab. There we click Ind. Maint., then the Create button, and name our new object Z_AUT1.

Then we add the predefined special authorization characteristics to the list: 0TCAACTVT (activity), 0TCAIPROV (InfoProvider), and 0TCAVALID (validity).

A user must have at least one assigned authorization object that includes these characteristics. Otherwise, they will not be able to run queries. SAP recommends adding them to every authorization object for transparency, even though it is not a requirement.
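The rule about the three special characteristics can be expressed as a small check. This is a simplified model of the rule as stated here, not of how BW evaluates authorizations internally; representing an authorization as a Python dict and the function name `can_run_queries` are assumptions made for illustration.

```python
# The three special characteristics named in the text.
SPECIAL_CHARACTERISTICS = {"0TCAACTVT", "0TCAIPROV", "0TCAVALID"}


def can_run_queries(authorizations: list) -> bool:
    """True if at least one authorization contains all three special characteristics."""
    return any(SPECIAL_CHARACTERISTICS <= set(auth) for auth in authorizations)


# Each authorization maps a characteristic name to its list of allowed values.
z_aut1 = {"0TCAACTVT": ["03"], "0TCAIPROV": ["*"], "0TCAVALID": ["*"]}
incomplete = {"0COSTCENTER": ["*"]}

print(can_run_queries([incomplete]))          # False
print(can_run_queries([incomplete, z_aut1]))  # True
```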

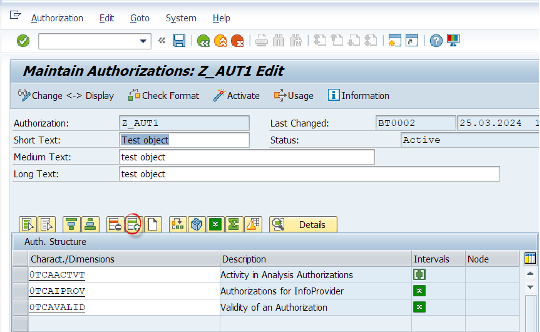

By default, the following values are set:

- Read (03) as the activity

- Always Valid (*) as the validity

- All (*) as the InfoProvider

You also need to assign the activity Change (02) for changes to data in Integrated Planning.

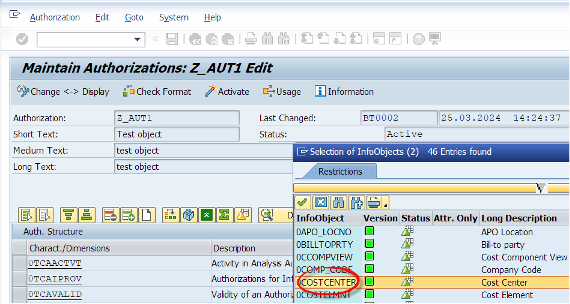

Let's add a row as shown below and then select a characteristic that is authorization-relevant.

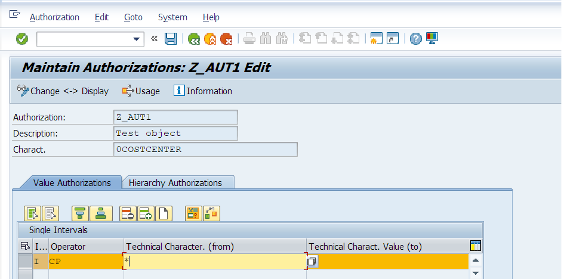

Now we have added 0COSTCENTER to our authorization object with access to all cost center entries using the * character.
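To illustrate what the `*` entry means, the sketch below models Z_AUT1 as a plain dict and checks a request against it. It is a deliberately simplified model: only exact values and a trailing `*` are handled (no intervals), the helper names `value_matches` and `is_allowed` are invented, and the InfoProvider name and cost center value are made up for the example.

```python
def value_matches(pattern: str, value: str) -> bool:
    """Match a value against an exact entry or an entry ending in '*'."""
    if pattern.endswith("*"):
        return value.startswith(pattern[:-1])
    return pattern == value


def is_allowed(authorization: dict, request: dict) -> bool:
    """Every characteristic in the request must match one authorized entry."""
    return all(
        any(value_matches(p, value) for p in authorization.get(characteristic, []))
        for characteristic, value in request.items()
    )


z_aut1 = {
    "0TCAACTVT": ["03"],    # Read
    "0TCAIPROV": ["*"],     # all InfoProviders
    "0TCAVALID": ["*"],     # always valid
    "0COSTCENTER": ["*"],   # all cost centers
}

# Hypothetical requests: InfoProvider and cost center are made-up values.
read_request = {"0TCAACTVT": "03", "0TCAIPROV": "ZSALES01", "0COSTCENTER": "1000"}
change_request = {**read_request, "0TCAACTVT": "02"}

print(is_allowed(z_aut1, read_request))    # True
print(is_allowed(z_aut1, change_request))  # False
```

In this model, adding `"02"` to the 0TCAACTVT entry would let the change request through, which mirrors the Change (02) activity needed for Integrated Planning.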

How can we assign this authorization object to a user?

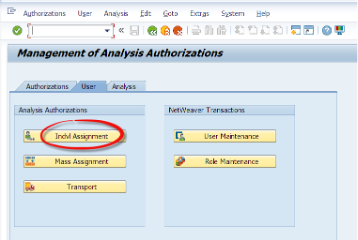

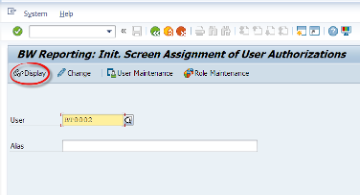

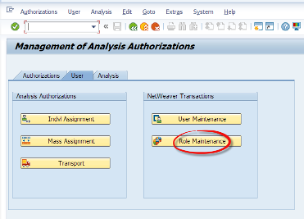

Let’s go back to the RSECADMIN T-code. Under the User tab, we click the Indvl. Assignment button.

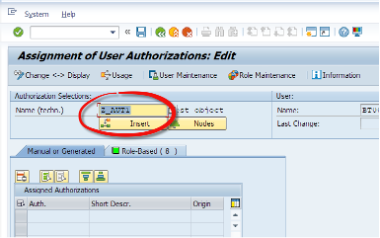

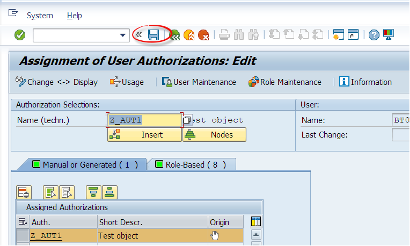

After entering the user name, we hit the Change button. We enter the technical name of the authorization object we created, then press the Insert button to add it to the user manually. Finally, we hit the Save button.

As mentioned before, this is not the preferred way. Let’s look at the other way.

We will assign this object to a role instead. Let’s first create a role.

How to create a Role in SAP BW?

Now let's move on to role creation. We have authorization objects, and we can assign them to roles or directly to users. We can also assign the roles to the users, which is easier to maintain.

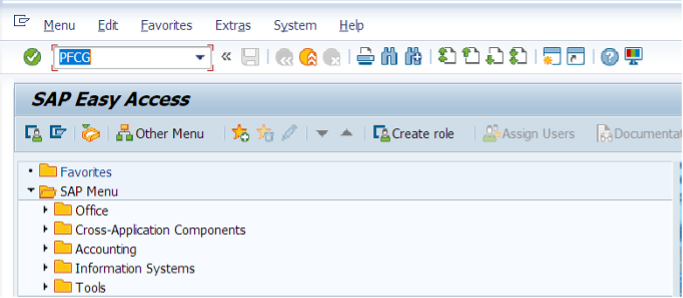

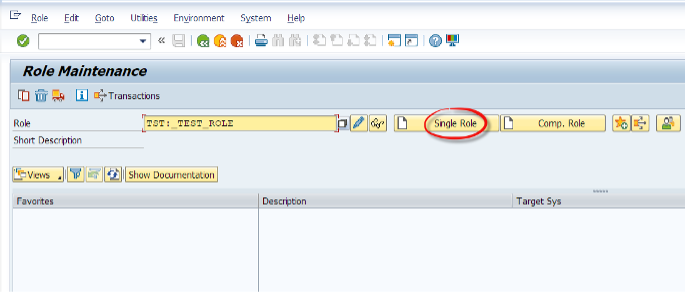

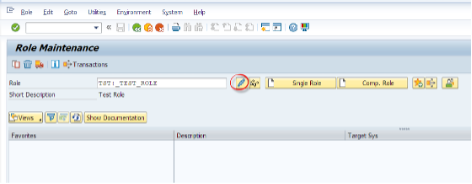

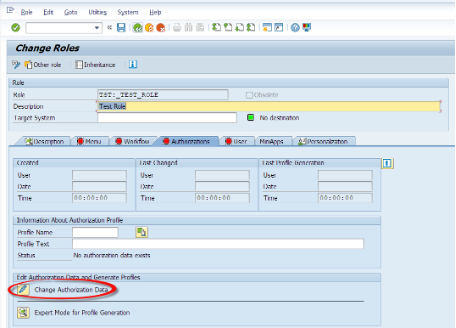

Now we can use RSECADMIN or PFCG to create a role.

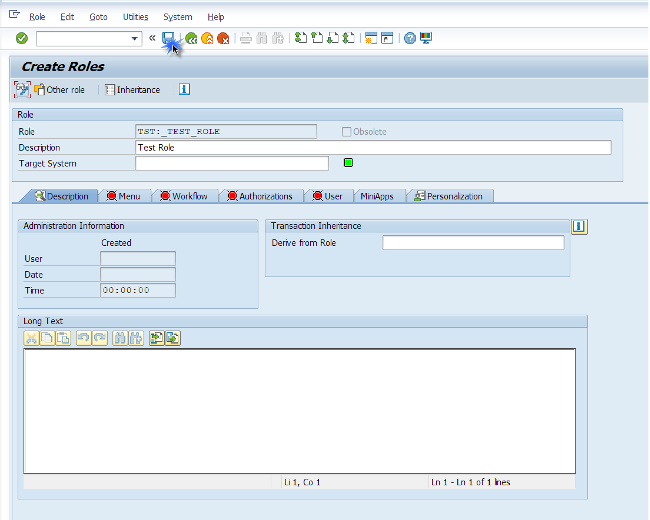

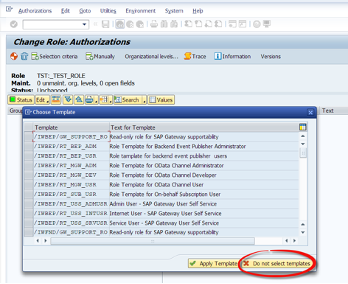

The system will ask whether we want to apply any generic templates. For the sake of simplicity, we will skip this step.

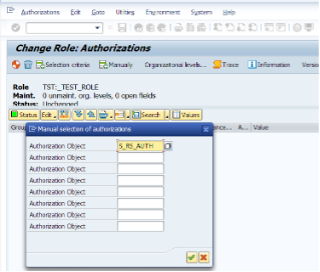

There is a system object called S_RS_AUTH that stores analysis authorization objects. Every authorization object we create must be stored under this object.

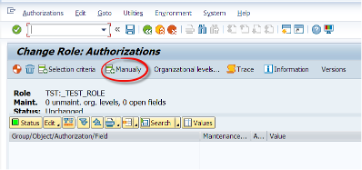

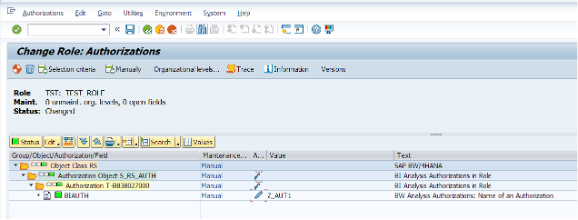

We will manually assign this object to our custom role. Then we will assign this role to the user for easier management of security.

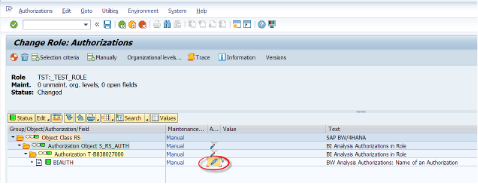

Now we are adding the authorization object we have previously created under the S_RS_AUTH object.

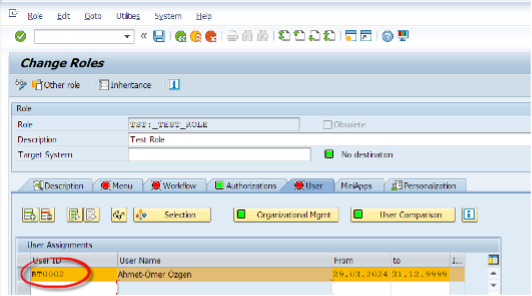

Now we are assigning this role to the user.

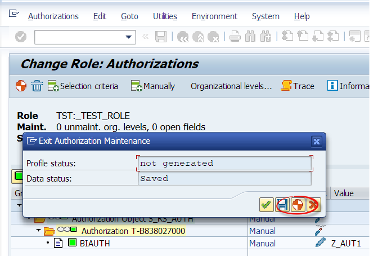

How to generate the Authorization Profile

Before saving the role assignment, we must generate the authorization profile. As a prerequisite, our own user needs the S_USER_PRO authorization.

What SAP says: “You must generate authorization profiles before you can assign them to users. An authorization is generated for each authorization level in the browser view, and an authorization profile for the whole role as represented in the browser view.”

By clicking the Generate button, we are generating the related authorization profile.

Notes

When assigning authorization objects to a role, if we do not know which objects we need, we can click the Selection criteria button. Clicking the red minus icons turns them into green plus icons; we then hit the Insert Chosen button, which adds the selected objects to the role.

We can add authorization objects that let users access InfoCubes, ADSOs, or related BW objects. We can change the user's access (READ, MODIFY, and so on) in the Activity field, which is set to * here, under the added authorization object.

Written May 2024