Starting Point

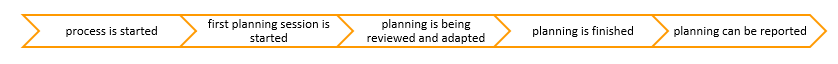

The following example illustrates the features of the calendar. At a bicycle manufacturer, the sales managers of the individual bicycle divisions (mountain bike, road bike, etc.) must prepare a sales forecast. The process should look as follows:

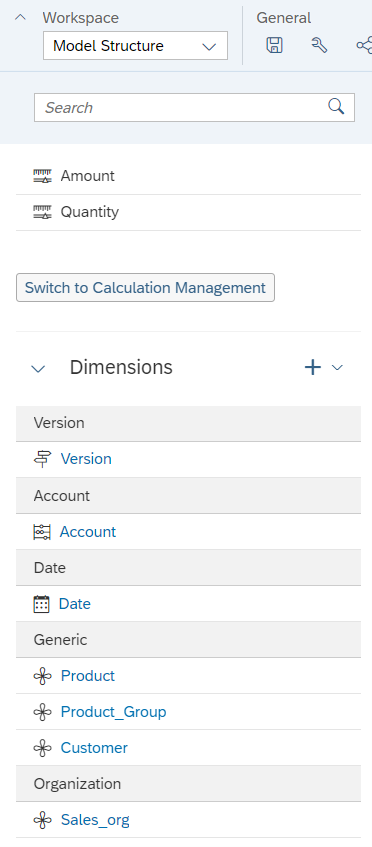

The starting point for planning is a model that has been set up for planning.

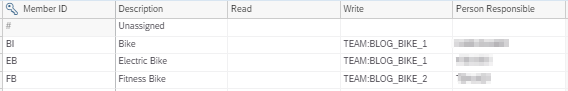

The sales managers are assigned to individual product groups and plan them as a team. Each planning task is restricted by product group. Responsible persons can be defined in the master data of the product group dimension. Because this information is copied when the calendar is created, we recommend defining groups here rather than individual users. The person responsible is needed later in the process.
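The following minimal Python sketch (not SAC code) shows why a copied individual user can become outdated while a copied group does not. The team and user names are hypothetical. The sketch assumes, as the recommendation implies, that group membership is evaluated when someone works on the task and not when the task is created.

```python
from dataclasses import dataclass

# Hypothetical team directory: team name -> current members.
# Assumption: membership is resolved when the task is worked on,
# while the "Person Responsible" value is copied at creation time.
teams = {"TEAM_MTB": {"anna"}}


@dataclass
class Task:
    product_group: str
    responsible: str  # copied from the master data when the calendar is created


def assignees(task: Task) -> set[str]:
    """Resolve who can work on the task right now."""
    # A team name expands to its current members; a user stays as copied.
    return teams.get(task.responsible, {task.responsible})


task_user = Task("Mountain Bike", responsible="anna")      # individual user copied
task_team = Task("Mountain Bike", responsible="TEAM_MTB")  # group copied

# Anna leaves the mountain bike team and Ben joins it.
teams["TEAM_MTB"] = {"ben"}

print(assignees(task_user))  # {'anna'}: the copied user is now outdated
print(assignees(task_team))  # {'ben'}: the group reflects the change
```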

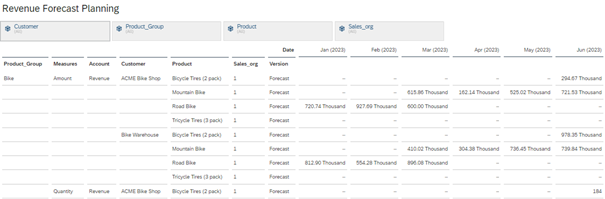

Planning should initially take place in a story that contains only the planning model in an input-ready table.

This story is the starting point of the planning process.

Modelling the planning process in the Analytics Cloud

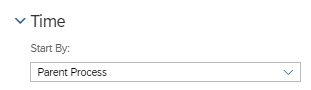

The planning calendar offers various building blocks, so-called tasks, from which the process can be modelled. Every task can hold working files (stories or analytic applications) as well as descriptions and personal notes. Each task needs a start condition, and a fixed start time can always be set. Some tasks can alternatively be started by their parent process or depend on other tasks. The parent process, under which all other tasks are grouped, is specified in the start conditions of those tasks.
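The three kinds of start condition described above can be summarised in a small Python model. This is a conceptual sketch and not the calendar's API. The class, field and task names are hypothetical, and each task is assumed to use exactly one kind of start condition.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass
class StartCondition:
    """Hypothetical model: a task is expected to use exactly one of these."""
    at: Optional[datetime] = None                   # fixed start date and time
    with_parent: bool = False                       # start when the parent process starts
    after: list[str] = field(default_factory=list)  # start when these tasks are completed


def can_start(cond: StartCondition, now: datetime,
              parent_started: bool, completed: set[str]) -> bool:
    """Return True if a task with this start condition may start now."""
    if cond.at is not None:
        return now >= cond.at
    if cond.with_parent:
        return parent_started
    if cond.after:
        return all(name in completed for name in cond.after)
    return False  # no start condition set: the task never starts


now = datetime(2024, 1, 15, 9, 0)
print(can_start(StartCondition(at=datetime(2024, 1, 15, 8, 0)), now, False, set()))  # True
print(can_start(StartCondition(with_parent=True), now, False, set()))                # False
print(can_start(StartCondition(after=["Plan_A", "Plan_B"]), now, True, {"Plan_A"}))   # False
```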

An overview of the individual tasks:

- General Task: Assigns stories or analytic applications directly to persons or teams, together with a defined filter area, responsible persons or reviewers, a time frame and other files that could help with planning, for example reports showing the last planning round.

- Review Task: Passes the results of a planning round on for approval. A review task always depends on another task or on a parent process.

- Composite Task: Combines a General Task and a Review Task. If the process should not be too granular, a Composite Task can replace a separate General Task and Review Task. It starts either at a specific time, after another task, or with its parent process.

- Process: Groups other tasks and thus organises the process. Otherwise, it has the same capabilities as a General Task.

- Data Locking Task: Sets data locks automatically or manually.

- Data Action Task and Multi Action Task: Execute data actions or multi actions.

- Input Task: An older task type that is no longer used.

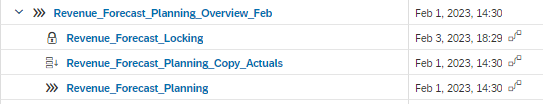

An initial structure of the process could look as follows.

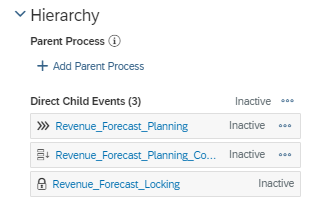

The first process only serves to organise the others and has no work content of its own. It is the parent process of all underlying tasks.

The Revenue_Forecast_Planning task is where the planning and review rounds take place. The second task is a data action task that copies the previous year's actuals into the forecast as the basis for this year's forecast. It starts as soon as the parent process has started.

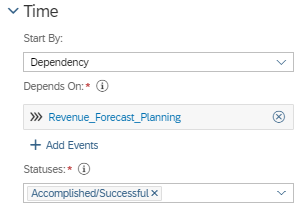

The Revenue_Forecast_Locking task sets a data lock on the data areas that must not be changed after planning. This task does not depend on the parent process but on the Revenue_Forecast_Planning task: as soon as that task has been completed successfully, the data lock is set.

These four objects form a chain of events that build on each other and replicate the planning process:

- Start of the parent process

- Automatic execution of the data action, which copies the actuals into the forecast version

- Start of the actual planning

- Data lock
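The chain above is essentially a dependency graph. The following Python sketch uses the standard library's `graphlib` to derive the order in which the four objects become ready. The names `Sorting_Process` and `Copy_Actuals` are hypothetical placeholders for the unnamed parent process and data action task. The sketch also ignores the difference between "starts when the parent process starts" and "starts when a task is completed", so it only shows the resulting order.

```python
from graphlib import TopologicalSorter

# Each object maps to the objects it waits for (its predecessors).
# "Sorting_Process" and "Copy_Actuals" are hypothetical placeholder names.
dependencies = {
    "Sorting_Process": set(),
    "Copy_Actuals": {"Sorting_Process"},
    "Revenue_Forecast_Planning": {"Sorting_Process"},
    "Revenue_Forecast_Locking": {"Revenue_Forecast_Planning"},
}


def execution_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group objects into waves; a wave is ready once all earlier waves are done."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable, readable order
        waves.append(ready)
        sorter.done(*ready)
    return waves


for i, wave in enumerate(execution_waves(dependencies)):
    print(i, wave)
# 0 ['Sorting_Process']
# 1 ['Copy_Actuals', 'Revenue_Forecast_Planning']
# 2 ['Revenue_Forecast_Locking']
```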

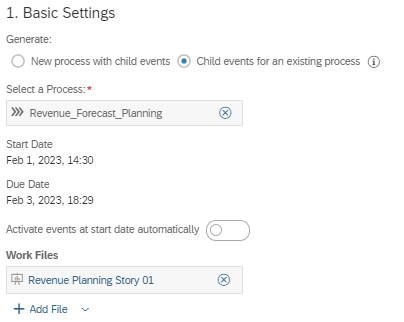

Now the Revenue_Forecast_Planning process must be filled with content. The Event Wizard generates all the necessary planning tasks. Our planning story is the basis for all tasks in the process.

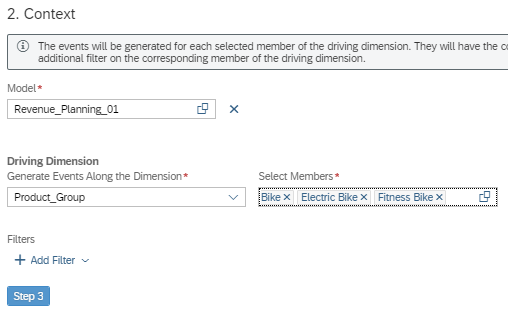

Planning is split by product group, so we choose the product group as the Driving Dimension.

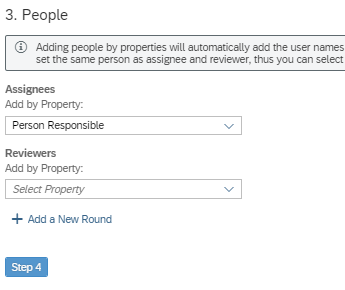

The persons responsible ("Person Responsible") defined in the product group dimension become the assignees of the tasks. A reviewer is not needed here because a separate Review Task takes over this role.

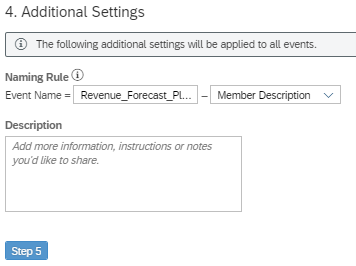

In the fourth step, the exact event names can be defined. For our example, the description is sufficient.

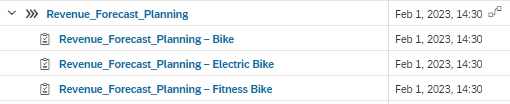

After a preview, the tasks are created automatically.

These tasks are time-dependent because the Event Wizard does not allow any other start condition. However, each task can afterwards be changed individually so that it starts with the parent process.
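The following Python sketch imitates what the wizard does and the rework it leaves behind. It only mirrors the behaviour described above; the calendar itself is configured in the UI, not with code. The product group City Bike, the team names and the task naming pattern are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PlanningTask:
    name: str
    product_group: str   # member of the driving dimension
    assignee: str        # copied from "Person Responsible"
    start: str = "time"  # the wizard only creates time-dependent tasks


# Hypothetical master data: product group -> Person Responsible (a team).
person_responsible = {
    "Mountain Bike": "TEAM_MTB",
    "Road Bike": "TEAM_ROAD",
    "City Bike": "TEAM_CITY",
}


def generate_tasks(responsible: dict[str, str]) -> list[PlanningTask]:
    """Imitate the wizard: one time-dependent task per driving-dimension member."""
    return [PlanningTask(f"Revenue_Forecast_{group.replace(' ', '_')}", group, owner)
            for group, owner in responsible.items()]


tasks = generate_tasks(person_responsible)

# Manual rework: each task must be switched to start with the parent process.
manual_changes = 0
for task in tasks:
    task.start = "with_parent"
    manual_changes += 1

print(len(tasks), manual_changes)  # 3 3
```

The number of manual changes grows with the number of product groups, which is the effort discussed under the difficulties further below.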

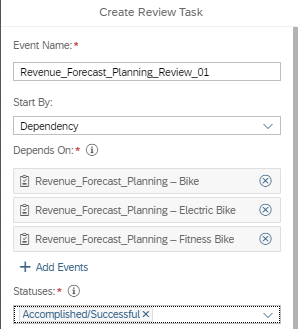

Individual reviewers can be added directly in the tasks. This is useful when each plan is reviewed by a different group of people. For this process, we use a single Review Task that starts as soon as all planning is completed, because all results are to be reviewed by the same group.

The Review Task depends directly on the planning tasks: as soon as all of them are completed, the review begins.
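The start rule of the Review Task comes down to a single check across all planning tasks. Here is a minimal Python sketch of that check. The status values and the third product group name are hypothetical.

```python
def review_can_start(plan_status: dict[str, str]) -> bool:
    """The single Review Task starts only when every planning task is completed."""
    return all(status == "completed" for status in plan_status.values())


status = {"Mountain Bike": "completed", "Road Bike": "completed", "City Bike": "in_progress"}
print(review_can_start(status))  # False
status["City Bike"] = "completed"
print(review_can_start(status))  # True
```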

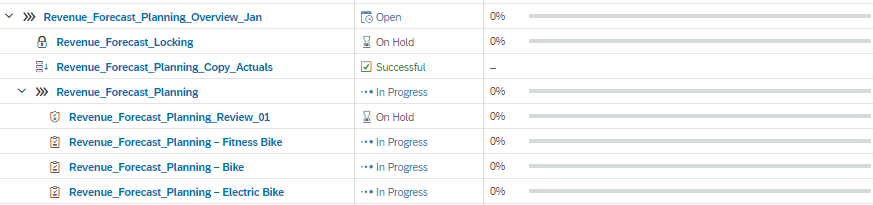

The whole process looks like this:

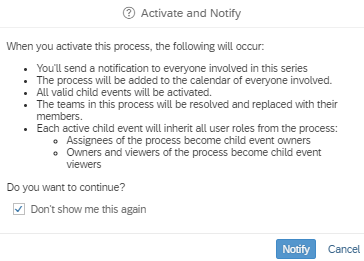

Before planning can start, the process must be activated.

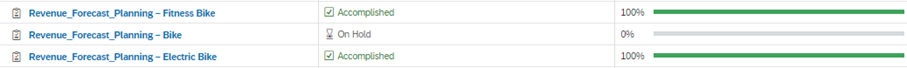

After activation, the data action copies the data and the planning tasks begin. The status and progress of each task are directly visible in the calendar. The user can define how progress is measured. For a task with two review rounds, for example, the actual planning could account for 50% of the progress, the first review round for 25% and the second review round for the remaining 25%.
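The weighting in this example is simple arithmetic: progress is the sum of the weights of the completed steps. The Python sketch below uses the weights from the example. The step names are hypothetical.

```python
# Weights from the example: planning 50 %, two review rounds 25 % each.
# The step names are hypothetical.
weights = {"planning": 50, "review_round_1": 25, "review_round_2": 25}


def progress(completed_steps: set[str]) -> int:
    """Progress in percent as the sum of the weights of the completed steps."""
    assert sum(weights.values()) == 100, "weights must add up to 100 %"
    return sum(weights[step] for step in completed_steps)


print(progress({"planning"}))                    # 50
print(progress({"planning", "review_round_1"}))  # 75
```

Integer percentages are used so that the total is exactly 100 without floating-point rounding.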

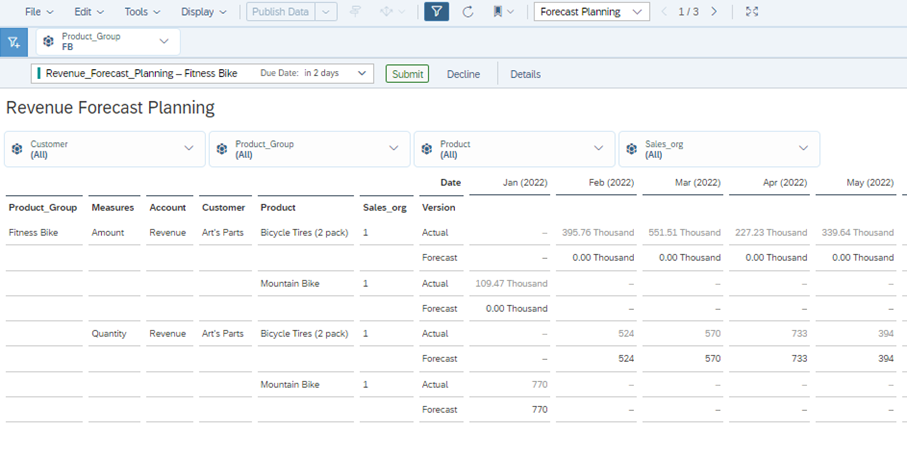

The planners can be notified of their new task by e-mail or within SAC. In both cases, a link leads to the planning story, where the defined planning tasks are waiting with the corresponding filters applied. When this story or analytic application is opened from the calendar, an additional toolbar appears that lets planners submit or decline their own task.

Submit completes the task and marks it as completed in the calendar. Decline puts the task on hold for the time being. In the calendar, a new assignee can then be selected, or the task can be sent to the same person again. Submit always applies to all assignees of the task, whereas Decline can be applied either to a single assignee or to all of them.
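The Submit and Decline behaviour can be read as a small state machine. The following Python sketch models it under stated assumptions: the status names and the `reassign` step are hypothetical, and no SAC API is called.

```python
from dataclasses import dataclass, field


@dataclass
class CalendarTask:
    assignees: set[str]
    status: str = "open"  # hypothetical status names: open, on_hold, completed
    declined_by: set[str] = field(default_factory=set)

    def submit(self) -> None:
        # Submit applies to all assignees: the whole task is completed.
        self.status = "completed"

    def decline(self, who: set[str]) -> None:
        # Decline by one or all assignees puts the task on hold.
        self.declined_by |= who
        self.status = "on_hold"

    def reassign(self, new_assignees: set[str]) -> None:
        # A new assignee is chosen in the calendar (or the same one again).
        self.assignees = new_assignees
        self.declined_by.clear()
        self.status = "open"


task = CalendarTask({"anna", "ben"})
task.decline({"anna"})
print(task.status)  # on_hold
task.reassign({"carla", "ben"})
print(task.status)  # open
task.submit()
print(task.status)  # completed
```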

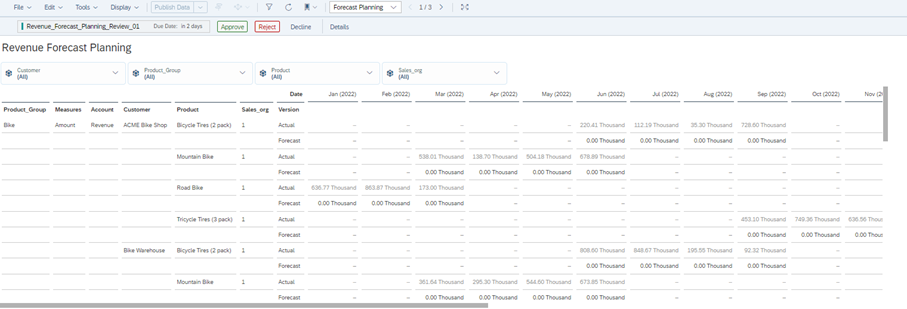

After the last planning task has been submitted, the Review Task begins. Its layout is similar to that of the planning tasks.

Approve corresponds to Submit. Reject returns the task to the previous planners and resets its status. Because a single Review Task covers all three planning tasks, a rejection reopens all three of them. With Approve, this part of the planning is completed. The parent process Revenue_Forecast_Planning can then be submitted, and thus declared complete, directly in its task in the calendar. Once the planning process is completed, the data locking task automatically locks the defined data area. The entire planning is now finished and ready to be checked in reporting.
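Because a single Review Task covers all three planning tasks, the effect of Approve and Reject can be sketched as follows. This is a minimal Python model that only mirrors the behaviour described above. The function name, status values and third product group are hypothetical.

```python
def apply_review(decision: str, plan_status: dict[str, str]) -> tuple[dict[str, str], bool]:
    """Return the new planning statuses and whether the review is finished."""
    if decision == "approve":
        # Planning stays completed and the review is finished. The parent process
        # still has to be submitted in the calendar before the locking task runs.
        return dict(plan_status), True
    if decision == "reject":
        # One Review Task covers all planning tasks, so a rejection
        # returns every one of them to its planners.
        return {group: "open" for group in plan_status}, False
    raise ValueError(f"unknown decision: {decision!r}")


done = {"Mountain Bike": "completed", "Road Bike": "completed", "City Bike": "completed"}
print(apply_review("reject", done))
# ({'Mountain Bike': 'open', 'Road Bike': 'open', 'City Bike': 'open'}, False)
```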

Difficulties in using the calendar

Manual rework for each generated single step

Setting up the process requires many manual steps. The example splits planning into only three product categories; with many more, the adjustment effort grows accordingly. Because the wizard for creating the tasks does not allow dependencies to be set, every individual task must be corrected manually. Convenience features that would simplify modelling are missing. The process modelled here could be used as-is for January, but it would first have to be copied and, if necessary, adapted for February, which again adds manual effort.

Time-dependent representation, not process-dependent

The layout and the mandatory time dependency of all processes take a lot of getting used to. The view is always organised by time period rather than by process. In the monthly view, processes for the following month are not displayed, and in the annual view, performance suffers greatly as soon as many individual tasks are involved.

No integration with data loading

A task for loading data into the planning model would be desirable. Without coding, import and export jobs can currently only be scheduled via the data management of the model, and only on a time basis. Event-based import and export triggered by calendar tasks would be desirable.

Analytic Applications expand the calendar possibilities

Some usability issues can be worked around within an analytic application using the calendar API. Above all, the approve and submit actions that otherwise appear automatically when a calendar task is opened can be built into the application itself via the API. Composite tasks can also be created directly. However, processes cannot be modelled this way, and the tasks created can only be started on a time basis.

Conclusion

In summary, the calendar is well suited for modelling simple planning processes. The collaboration options are a strength of SAP Analytics Cloud and come into their own here. However, changes to the planning or to individual tasks always require expert support. It is therefore questionable whether business users can manage the calendar alone or whether IT support is also needed.
