Motivation

Comments are very often required in planning applications. SAP offers two options to store comments:

- Using a document store

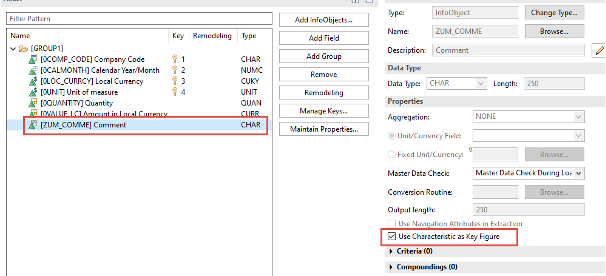

- Using an attribute as key figure

A short introduction to both options can be found, e.g., in the following blogs:

https://www.nextlytics.com/blog/integrated-comment-functions-in-sap-analytics-cloud-and-bw4/hana

https://evosight.com/comments-in-bw-ip-using-characteristic-as-key-figure

We often use the attribute-as-key-figure technique for the following reasons:

- Comments are directly visible in the query

- Reports can be built on comments

- With a document store, comments are only visible as Excel-like cell comments, and you have to click on each cell to read them

- Older and more stable solution

- The limitation to 250 characters is not critical in most cases

Recently, we had the requirement to show the history of comments in a report. As there is no out-of-the-box solution for this, we want to share our approach with you.

Activating the audit trail in a composite provider makes it possible to show the history of changes for key figures without any programming.

Unfortunately, this does not work for comments: the audit trail does not show any request number for comments, so no history of comments is shown.

Nevertheless, the history of comments is stored in the changelog table. This is the starting point of our solution. We will show you two options to provide this information from the changelog within a query:

- via HANA calculation view

- via ABAP CDS-View

Both options lead to the same result. Which one you use depends on your skills and/or on restrictions regarding the use of HANA artefacts.

Of course, reading data from the changelog table in a report is not officially supported by SAP, since SAP may change the name or structure of these tables. In practice, however, it works quite reliably.

Logging changes via the BAdI BADI_RSPLS_LOGGING_ON_SAVE would be another option. However, as this option requires more development effort, we do not investigate it here. For more details, see:

Why do Audit trails not work?

First, we want to make sure that we did not miss an option, and find out why audit trails are not available for comments.

When you activate the audit trail in a composite provider, the system simply makes the request information of the data available in the report. As soon as you activate planning data, the request information is lost and the audit trail shows no information. Technically, the data is moved from table 1 (inbound table) to table 2 (active table) of the aDSO (e.g. for aDSO ###, the tables are /BIC/A###1 and /BIC/A###2).

(For the tables of an aDSO and the different aDSO types, see e.g. https://learning.sap.com/learning-journeys/upgrading-your-sap-bw-skills-to-sap-bw-4hana/working-with-the-datastore-object-advanced-_b1fbbc9a-e6ad-44bf-bfce-d5b2defa3ccb)

However, only for an aDSO of type data mart is reporting based on the inbound table 1 and table 2 together. For all other aDSO types, reporting is always based only on table 2, which contains the activated data!
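To make the difference concrete, here is a minimal Python toy model of which tables a query reads. It is not SAP code: the rows, the field names and the helpers `reporting_rows` and `audit_requests` are hypothetical and only mirror the behaviour described above, i.e. request numbers survive only in table 1, and only a data mart aDSO reads table 1 in reporting.

```python
# Toy model (hypothetical, not SAP code) of which aDSO tables a query reads.
# Rows and field names are made up for illustration.
INBOUND = [  # table 1 (/BIC/A<adso>1): not yet activated, keeps its request TSN
    {"REQTSN": "20240106101500000002000", "COSTCENTER": "CC200", "AMOUNT": 50.0},
]
ACTIVE = [  # table 2 (/BIC/A<adso>2): activated data, no request TSN anymore
    {"COSTCENTER": "CC100", "AMOUNT": 100.0},
]


def reporting_rows(adso_type: str) -> list:
    """Return the rows a query sees for the given aDSO type."""
    if adso_type == "data_mart":
        # data mart: reporting reads the inbound and the active table together
        return INBOUND + ACTIVE
    # all other types: reporting reads the active table only
    return list(ACTIVE)


def audit_requests(rows: list) -> set:
    """Request TSNs an audit trail could display for the given rows."""
    return {row["REQTSN"] for row in rows if "REQTSN" in row}


print(audit_requests(reporting_rows("standard")))   # set()
print(audit_requests(reporting_rows("data_mart")))  # {'20240106101500000002000'}
```

As soon as the row in table 1 is activated, it would move to table 2 and the data mart case would also return an empty set, which matches the behaviour of the audit trail after activation.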

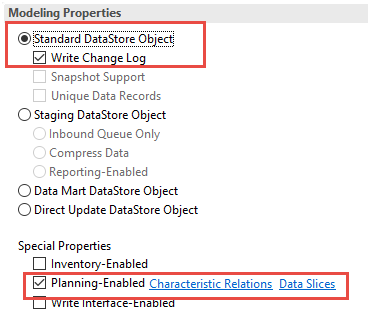

As a data mart aDSO requires all characteristics to be key fields, attributes as key figures are not possible in this type of aDSO. That is why audit trails are not available for comments.

However, if you activate the change log for a standard DataStore object, the history, including the history of comments, is available in table 3 of the aDSO (/BIC/A###3)!
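The following hypothetical Python sketch illustrates what happens to a changed comment when a standard aDSO with change log is activated. It assumes the usual BW change log convention: a before image (record mode X, key figures with reversed sign), an after image (blank record mode) and N for new records. The function `activate_change` and all field names are made up for illustration.

```python
# Toy model (hypothetical, not SAP code) of activation in a standard aDSO
# with change log. Record modes are assumed to follow the usual BW change
# log convention: "N" = new record, "X" = before image, " " = after image.


def activate_change(active, changelog, reqtsn, key, comment, amount):
    """Activate one changed record and append its change log images."""
    old = active.get(key)
    if old is None:
        # first version of this key: a single "new" image
        changelog.append({"REQTSN": reqtsn, "RECORDMODE": "N", "KEY": key,
                          "COMMENT": comment, "AMOUNT": amount})
    else:
        # before image: old comment, key figure with reversed sign
        changelog.append({"REQTSN": reqtsn, "RECORDMODE": "X", "KEY": key,
                          "COMMENT": old["COMMENT"], "AMOUNT": -old["AMOUNT"]})
        # after image: new comment and new key figure value
        changelog.append({"REQTSN": reqtsn, "RECORDMODE": " ", "KEY": key,
                          "COMMENT": comment, "AMOUNT": amount})
    # the active table only keeps the latest state and no request TSN
    active[key] = {"COMMENT": comment, "AMOUNT": amount}


active, changelog = {}, []
activate_change(active, changelog, "20240105093000000001000", "CC100", "Initial plan", 100.0)
activate_change(active, changelog, "20240106101500000002000", "CC100", "Raised after review", 150.0)

print(active["CC100"]["COMMENT"])             # Raised after review
print([row["COMMENT"] for row in changelog])  # ['Initial plan', 'Initial plan', 'Raised after review']
```

Because the before image carries the reversed old value, the key figure deltas in the change log add up to the current value (100 - 100 + 150 = 150), while the comment column keeps every historic text together with its request number.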

For more details see https://community.sap.com/t5/financial-management-blogs-by-sap/bpc-embedded-model-auditing-feature-in-adso-and-infocube/ba-p/13357497

Demo scenario

In our demo solution, key figures and comments are stored in the same aDSO. Our recommendation, however, would be to split them: store key figures in an aDSO of type data mart with audit information, and comments in a separate aDSO.

Our aDSO is a “Standard Data Store Object” with change log and planning enabled.

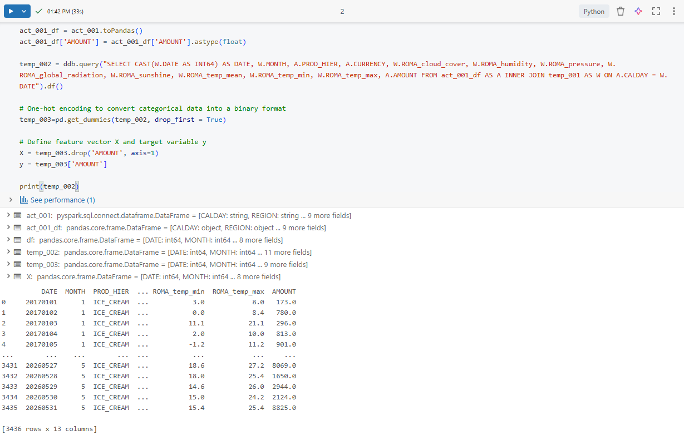

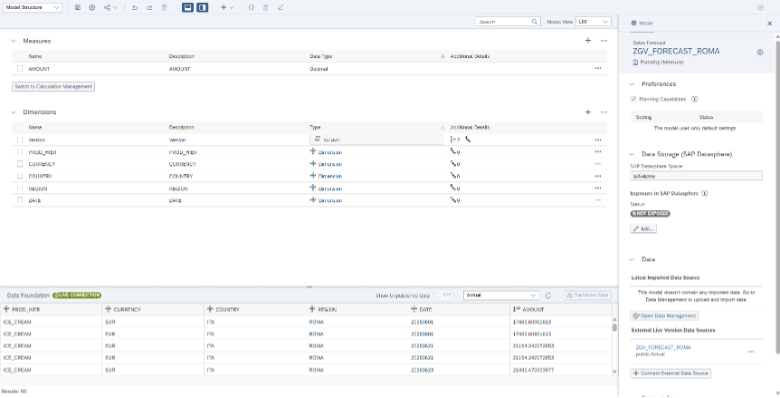

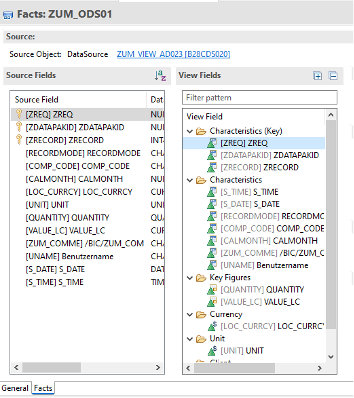

The aDSO contains the following fields, including one attribute as key figure:

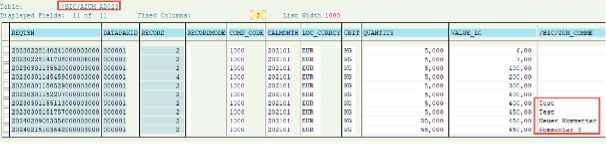

In the changelog table, you can see the historic comments. The user and the date and time of each change are not stored as separate fields; they are only available via the request number (REQTSN).
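If you want to decode the date and time of a change yourself, a sketch like the following could be used. It assumes that REQTSN starts with a 14-digit UTC timestamp (YYYYMMDDhhmmss) followed by further digits; please verify this against the request numbers in your own system. The helper name `reqtsn_to_utc` is hypothetical. The user cannot be derived this way and has to come from the request table, which is why the join with RSPMREQUEST is needed.

```python
from datetime import datetime, timezone


def reqtsn_to_utc(reqtsn: str) -> datetime:
    """Decode the UTC timestamp assumed to be encoded in a request TSN.

    Assumption: REQTSN starts with a 14-digit UTC timestamp
    (YYYYMMDDhhmmss); the remaining digits are ignored here.
    The user is NOT part of the TSN and must be read from the
    request table.
    """
    return datetime.strptime(reqtsn[:14], "%Y%m%d%H%M%S").replace(tzinfo=timezone.utc)


print(reqtsn_to_utc("20240106101500000002000"))  # 2024-01-06 10:15:00+00:00
```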

Solution via HANA Calculation View

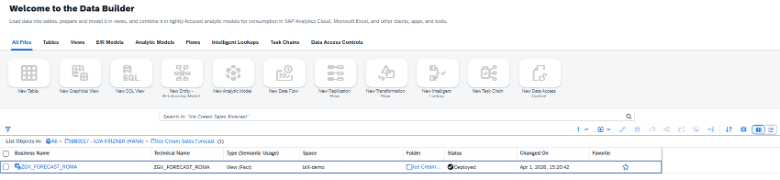

In this solution, we use a HANA calculation view that can be consumed in a composite provider.

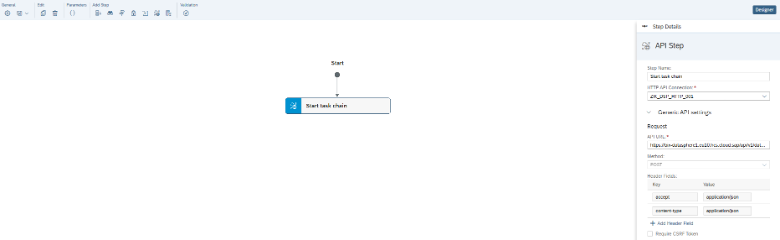

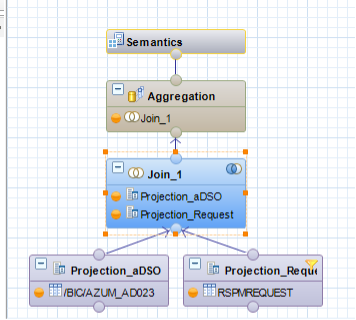

First, we create a calculation view that makes the changelog table available and joins it with table RSPMREQUEST to conveniently get the user and time of each request.

In addition, we filter on storage CL (change log) in the request table RSPMREQUEST so that we get only one line per request number.
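The join and the filter can be sketched in Python as follows. This is a hypothetical model of the calculation view logic, not the view itself: the field names REQUEST_TSN, STORAGE and USERNAME as well as the storage value AQ are illustrative assumptions, so please check the actual structure of RSPMREQUEST in your system. The last lines show why the filter matters: without it, each changelog row matches one request row per storage.

```python
# Hypothetical sketch of the calculation view logic (not SAP code).
# Request table field names and the storage value "AQ" are illustrative.
CHANGELOG = [
    {"REQTSN": "20240105093000000001000", "RECORDMODE": "N", "COMMENT": "Initial plan"},
    {"REQTSN": "20240106101500000002000", "RECORDMODE": "X", "COMMENT": "Initial plan"},
    {"REQTSN": "20240106101500000002000", "RECORDMODE": " ", "COMMENT": "Raised after review"},
]
REQUESTS = [  # the same request can appear once per storage
    {"REQUEST_TSN": "20240105093000000001000", "STORAGE": "AQ", "USERNAME": "PLANNER1"},
    {"REQUEST_TSN": "20240105093000000001000", "STORAGE": "CL", "USERNAME": "PLANNER1"},
    {"REQUEST_TSN": "20240106101500000002000", "STORAGE": "AQ", "USERNAME": "PLANNER2"},
    {"REQUEST_TSN": "20240106101500000002000", "STORAGE": "CL", "USERNAME": "PLANNER2"},
]


def join_changelog_with_requests(changelog, requests):
    """Left outer join of change log rows with request rows of storage CL."""
    # filtering on storage CL leaves one request row per request TSN
    cl_requests = {r["REQUEST_TSN"]: r for r in requests if r["STORAGE"] == "CL"}
    joined = []
    for row in changelog:
        request = cl_requests.get(row["REQTSN"])  # None if there is no match
        joined.append({**row, "USERNAME": request["USERNAME"] if request else None})
    return joined


for row in join_changelog_with_requests(CHANGELOG, REQUESTS):
    print(row["USERNAME"], repr(row["RECORDMODE"]), row["COMMENT"])

# without the storage filter, every change log row would match twice
unfiltered = [(c, r) for c in CHANGELOG for r in REQUESTS if r["REQUEST_TSN"] == c["REQTSN"]]
print(len(unfiltered))  # 6
```

The left outer join keeps a changelog row even if no matching request row is found; the user is then simply empty.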

Details of the left outer join:

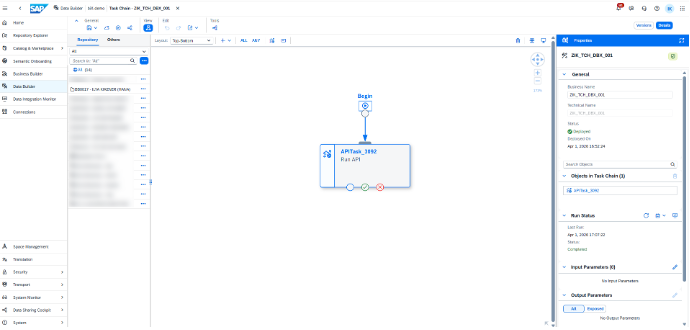

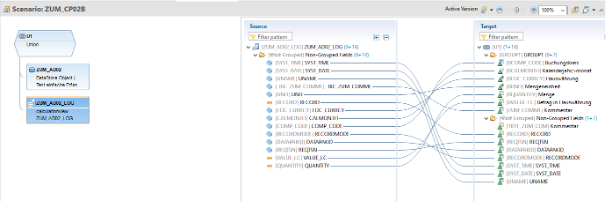

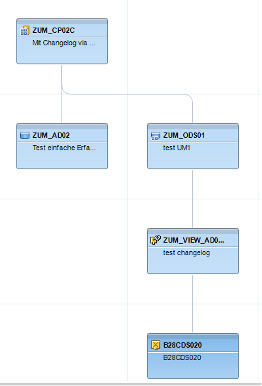

Now we create a composite provider with the original aDSO and our calculation view with the changelog.

We map the fields of the changelog view to the same fields as the original aDSO and add the additional changelog information. Note that key figures and comments now appear several times in the report, because the current reporting view is combined with the historic view. However, this makes it easy to check the changelog against the current values.
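A minimal Python sketch of what the composite provider returns, assuming changelog rows like those of the demo aDSO: both sources are mapped to the same fields, and a hypothetical PROVIDER column tells them apart (in a real query, an InfoProvider characteristic could serve this purpose). The function names are made up. The sketch shows both effects mentioned above: the current comment appears twice, and the changelog total matches the current value.

```python
# Hypothetical sketch of the composite provider (not SAP code): both sources
# are mapped to the same fields; PROVIDER is an assumed source indicator.
ADSO_ROWS = [  # current values from the active table
    {"KEY": "CC100", "COMMENT": "Raised after review", "AMOUNT": 150.0},
]
CHANGELOG_ROWS = [  # history from the change log view
    {"KEY": "CC100", "RECORDMODE": "N", "COMMENT": "Initial plan", "AMOUNT": 100.0},
    {"KEY": "CC100", "RECORDMODE": "X", "COMMENT": "Initial plan", "AMOUNT": -100.0},
    {"KEY": "CC100", "RECORDMODE": " ", "COMMENT": "Raised after review", "AMOUNT": 150.0},
]


def composite_union(adso_rows, changelog_rows):
    """Combine current and historic rows, tagging each with its source."""
    current = [{**r, "PROVIDER": "ADSO", "RECORDMODE": None} for r in adso_rows]
    history = [{**r, "PROVIDER": "CHANGELOG"} for r in changelog_rows]
    return current + history


def total_by_provider(rows):
    """Sum AMOUNT per source provider."""
    totals = {}
    for row in rows:
        totals[row["PROVIDER"]] = totals.get(row["PROVIDER"], 0.0) + row["AMOUNT"]
    return totals


rows = composite_union(ADSO_ROWS, CHANGELOG_ROWS)
print(len(rows))                # 4: the current comment appears twice
print(total_by_provider(rows))  # {'ADSO': 150.0, 'CHANGELOG': 150.0}
```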

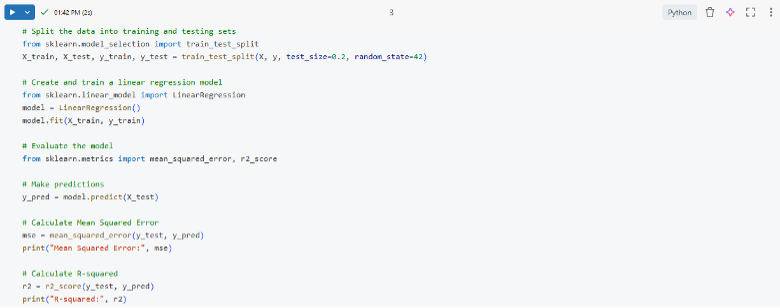

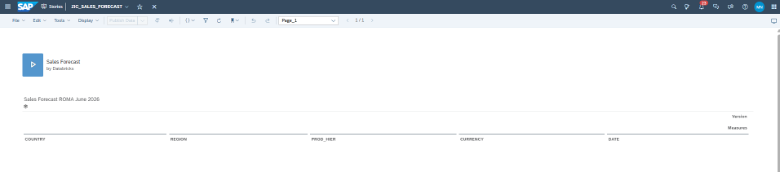

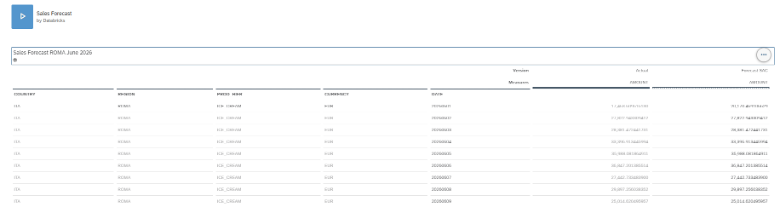

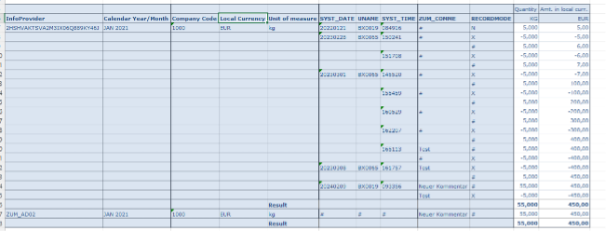

A query on top of this composite provider shows the history of changes. The total of all changes for the key figures is identical to the current value in the InfoProvider (last line).

This query can now be adapted to your needs, e.g. by excluding record mode X if you only want to see the new comments and avoid too many lines.
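Excluding record mode X can be sketched like this, using hypothetical rows that are already joined with the request information; the function `comment_history` and the field names are illustrative. Note the trade-off: once the before images are dropped, the key figure values no longer add up to the current value, so this filter is best suited for a pure comment history.

```python
# Hypothetical change log rows after the join (not SAP code); RECORDMODE "X"
# marks a before image that repeats the previous comment.
HISTORY = [
    {"TIMESTAMP": "2024-01-05 09:30", "RECORDMODE": "N", "COMMENT": "Initial plan"},
    {"TIMESTAMP": "2024-01-06 10:15", "RECORDMODE": "X", "COMMENT": "Initial plan"},
    {"TIMESTAMP": "2024-01-06 10:15", "RECORDMODE": " ", "COMMENT": "Raised after review"},
]


def comment_history(rows, exclude_before_images=True):
    """Return (timestamp, comment) pairs, optionally without before images."""
    return [(r["TIMESTAMP"], r["COMMENT"]) for r in rows
            if not (exclude_before_images and r["RECORDMODE"] == "X")]


for timestamp, comment in comment_history(HISTORY):
    print(timestamp, comment)
```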

Using ABAP CDS Views

If you cannot use HANA calculation views in your system, you can achieve the same result with ABAP CDS views!

For general information on how to use a CDS view in BW, see the following SAP Notes and the blog:

https://me.sap.com/notes/2198480

https://me.sap.com/notes/2673267

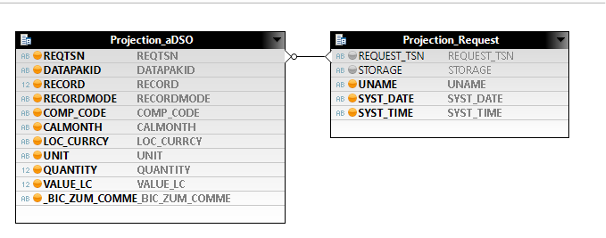

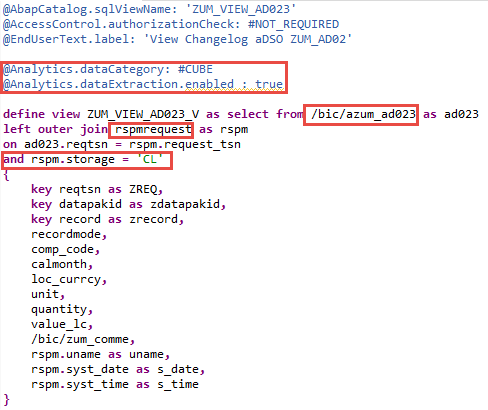

We use the same tables (changelog table /bic/azum_ad023 and request table RSPMREQUEST) and join them within the ABAP CDS view. The same filter on storage CL is applied.

Make sure to add the following annotations so that the Open ODS view can consume the data:

@Analytics.dataCategory: #CUBE

@Analytics.dataExtraction.enabled: true

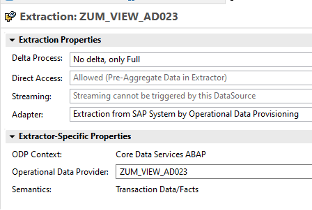

To use the CDS view, we first create a DataSource based on the CDS view and then an Open ODS view based on this DataSource, which is consumed in a composite provider:

DataSource:

Open ODS view:

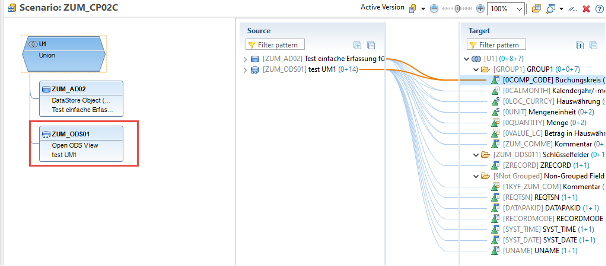

Now we use the Open ODS view together with our original aDSO in a composite provider and map all fields again.

The data flow now has a few more layers than the solution with a calculation view, but we do not need a HANA user!

In the end, we can create a query with the same outcome as in the solution with the HANA calculation view!

Conclusion

We have shown you two ways to make the history of comments available in a query. Both are quite simple and provide the requested information. Which way you want to use is up to you.